Here are two statements that seem like they might contradict each other but don’t:

Teachers typically overestimate the knowledge students have. Whenever I pick out skills x, y, and z that students will need to be successful with an upcoming topic and review and preteach them, I am surprised at how helpful that preparation is.

Teachers typically underestimate the problems students can solve. Whenever I ask students to try to figure something out, I am surprised at the variety and effectiveness of their strategies.

These can both be true! The distinction, to me, comes down to the idea of novices and experts.

An interesting result from research, often called the expertise reversal effect, is that novices tend to learn more from direct instruction, while experts tend to learn more from exploration and problem solving. One issue with this research is that the distinction between “novice” and “expert” can feel fuzzy. When does someone go from being a novice to being an expert?

I find it helpful to see the distinction as being about how much knowledge students bring to a situation. Many parts of math are sequential. If a student comes to a topic lacking a foundational skill, they may struggle to see the forest for the trees, need more direct guidance on where to focus their mental energy, and need a more structured learning progression. If a student has a lot of knowledge to bring to a subject, they can be successful with less guidance.

Here’s an example. I’m teaching 7th grade inequalities right now. One piece of knowledge I might assume students have is fluency with the > and < symbols. Students have seen these symbols before, but they are never as fluent with them as they need to be. All students would benefit from a refresher on what the symbols are, what they mean, and how to use them in a few different contexts. If I skip this refresher, I am setting students up to be novices: remembering what a symbol means or puzzling through a new use of it will consume working memory. A big part of working with inequalities is connecting the idea of an inequality to what students already know about equations. If all of the foundational pieces are in place, students can come to the topic as experts because they bring a lot of knowledge and skills that they can apply in a new context. With a bit of explicit instruction making those connections clear, they can do a lot more than I might expect. A student with that knowledge can be successful with less guidance and move more quickly to less structured problem-solving. Without the knowledge, students will struggle and need much more explicit, step-by-step instruction to move forward.

Here’s another example. A big topic in 7th grade is proportions. Most students arrive at the unit with tons of knowledge. They can often tell me that, if they drive for 2 hours at 50 miles per hour, they’ve traveled 100 miles, or that if they bike 16 miles in 2 hours they are traveling 8 miles per hour. That reasoning is a huge part of the proportions unit. I am setting students up as experts by drawing on what they already know. Where they are novices is formalizing that knowledge with precise mathematical language that they can then use to solve new problems. I might see students solving problems by finding a unit rate and assume they can apply that understanding elsewhere. Often they can’t, because they haven’t formalized their understanding in a way that lets them apply it in an unfamiliar context. I can take their expertise, deliver some explicit instruction connecting it to the ideas of “unit rate” and “constant of proportionality,” and help them expand what they can do.
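
As a rough sketch of what that formalizing step can look like, the two examples above can be rewritten with a constant of proportionality. The symbols $d$, $t$, and $k$ are my own labels for distance, time, and the unit rate. This is one common convention, not the only way to write it.

```latex
% Assumed notation: d = distance (miles), t = time (hours),
% k = unit rate, i.e. the constant of proportionality (miles per hour)
\begin{align*}
  d &= k\,t \\
  \text{Driving: } k = 50,\ t = 2 \quad &\Rightarrow \quad d = 50 \cdot 2 = 100 \text{ miles} \\
  \text{Biking: } d = 16,\ t = 2 \quad &\Rightarrow \quad k = \frac{d}{t} = \frac{16}{2} = 8 \text{ miles per hour}
\end{align*}
```

The $k$ that students already find intuitively as a unit rate is the same number that plays the role of the constant of proportionality in $d = kt$.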

My point is that novice and expert aren’t static labels that we can assign to students and leave in place for weeks or months or years. They are dynamic descriptions of the relationship between a student and what they are learning. When students are learning inequalities, I can set them up to be experts by making sure the foundation is secure, then making clear the connections between what they already know and what they are learning. When students are learning proportions, I can set them up as experts from the start by helping them recognize all the stuff they already know and can apply to problems. Then I give formal mathematical language to what they already know and help them see how to extend it to new problems, building off of their expertise. I can’t assume they will absorb this language by osmosis; I need to be clear and explicit about it. In both of these situations, students move back and forth between being novices and being experts, and my instruction changes accordingly. In each situation it’s easy for me to overestimate the knowledge they arrive with, and easy to underestimate what they can figure out if I set them up for success.

Here’s a contrasting case. I also teach circumference and area of circles. This is a topic where I think students are best in the novice position. The circumference formula doesn’t have much understanding behind it: the relationship is an empirical one, a pattern we’ve noticed and can use to solve new problems. That’s a very different type of formula from what students have seen before. The circle area formula does have understanding behind it, but the method of exhaustion necessary to see where the formula comes from is again a totally different way of understanding a formula than anything students have seen before. The unit is mostly about these two formulas, both of which are hard, and hard in different ways. I do all the fun interactive stuff: we measure circles and find the constant of proportionality, we count squares in big circles, and we use digital manipulatives to see how a circle can be rearranged into a rectangle. But I don’t pretend that those activities teach students for me. Some very clear, explicit instruction does the job, because students are novices at understanding formulas like these.
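
For reference, here is a sketch of the two formulas and of the rearrangement argument that the circle-into-rectangle activity points toward. The symbols $C$, $d$, $r$, and $A$ are standard, but they are my labels here. The rectangle is only approximate for any finite number of slices and becomes exact only in the limit.

```latex
% Assumed notation: C = circumference, d = diameter, r = radius, A = area
\begin{align*}
  \frac{C}{d} = \pi \quad &\Rightarrow \quad C = \pi d = 2\pi r \\
  \text{rearranged slices: } \text{base} \approx \tfrac{1}{2}C = \pi r,\ \text{height} \approx r \quad &\Rightarrow \quad A = \pi r \cdot r = \pi r^{2}
\end{align*}
```

The first line is the measured pattern, with $\pi$ acting as a constant of proportionality between circumference and diameter. The second line depends on a limiting argument, which is why it is a different kind of formula for students.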

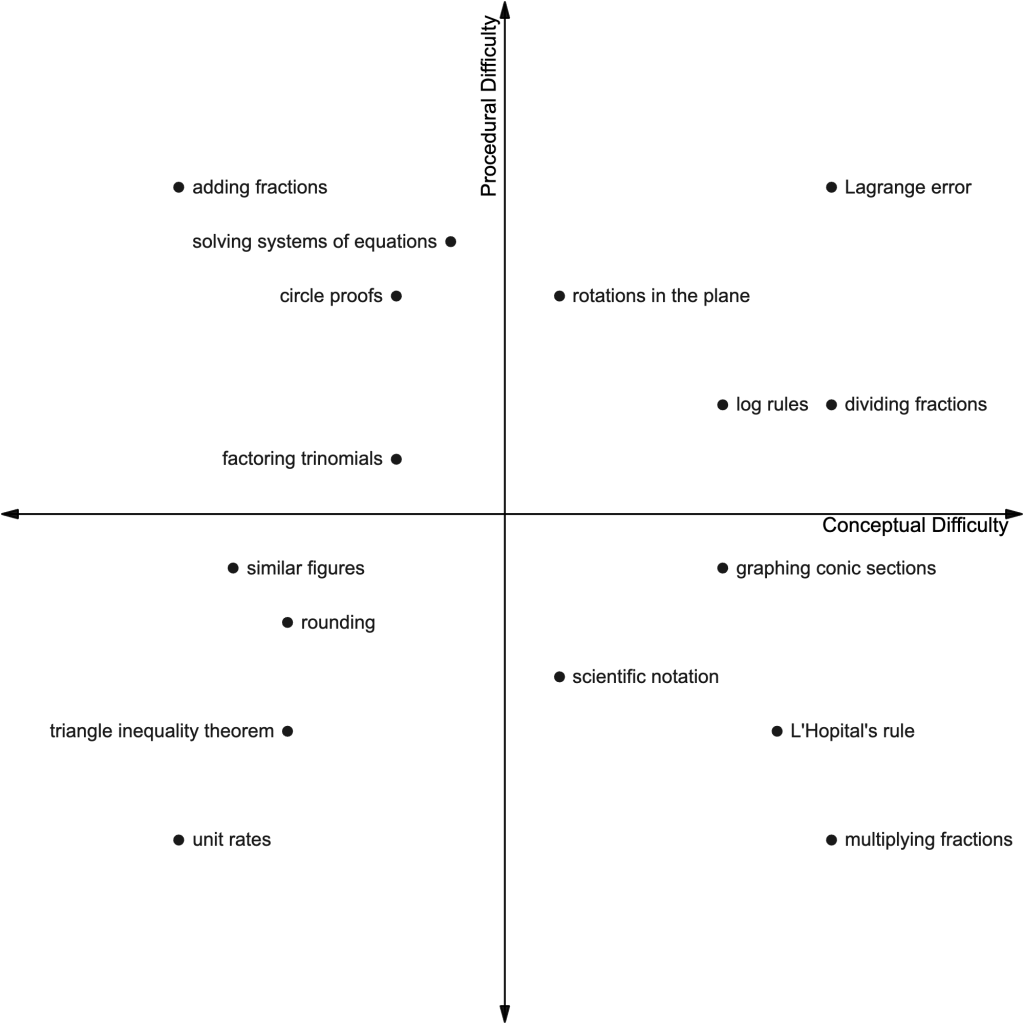

The structure of every topic is different. It’s easy to get lost in generalities during Twitter debates. One mathematical idea might set up a student as a novice early on, and then an expert later. Another idea might build off of a student’s expertise early but then move them into the novice role later on. These roles flow back and forth and blend together, and different students in the same room will fill different roles. There are lots more possibilities, and how a teacher approaches a subject affects this trajectory. I think the broader idea of novices and experts is helpful. But as soon as we start slapping those labels on students there’s a risk that we lose sight of what the label is actually describing.